
11/28/2020

Backpropagation Neural Network Python

In addition, for every gradient to evaluate, we would have to compute the loss function at least once (performing one forward pass with the perturbed weights and biases). Unfortunately, modern deep learning tools tend to abstract the hard part away from us, and we are then tempted to skip understanding the internal mechanics. In particular, not understanding backpropagation, the bread and butter of deep learning, would most likely lead you to design your networks poorly.
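To make the cost of estimating gradients numerically more concrete, here is a minimal sketch assuming a forward-difference approximation. The function `toy_loss` is a hypothetical stand-in for "one forward pass plus the loss". The sketch counts how many times the loss is evaluated when approximating the gradient with respect to every parameter.

```python
from typing import Callable, List, Tuple


def numerical_gradient(loss: Callable[[List[float]], float],
                       params: List[float],
                       eps: float = 1e-6) -> Tuple[List[float], int]:
    """Forward-difference gradient: (L(p + eps * e_i) - L(p)) / eps for each i.

    Returns the approximate gradient and the number of loss evaluations.
    """
    base = loss(params)  # one forward pass at the unperturbed parameters
    calls = 1
    grad = []
    for i in range(len(params)):
        shifted = list(params)
        shifted[i] += eps  # perturb a single parameter
        grad.append((loss(shifted) - base) / eps)
        calls += 1  # one extra forward pass per parameter
    return grad, calls


def toy_loss(p: List[float]) -> float:
    # Hypothetical stand-in for a network's forward pass plus loss.
    return sum(x * x for x in p)


if __name__ == "__main__":
    params = [0.5] * 1000
    grad, calls = numerical_gradient(toy_loss, params)
    print(calls)  # 1001: one base pass plus one pass per parameter
```

With a million weights, this approach would need roughly a million forward passes per gradient step, which is why it is impractical for training.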

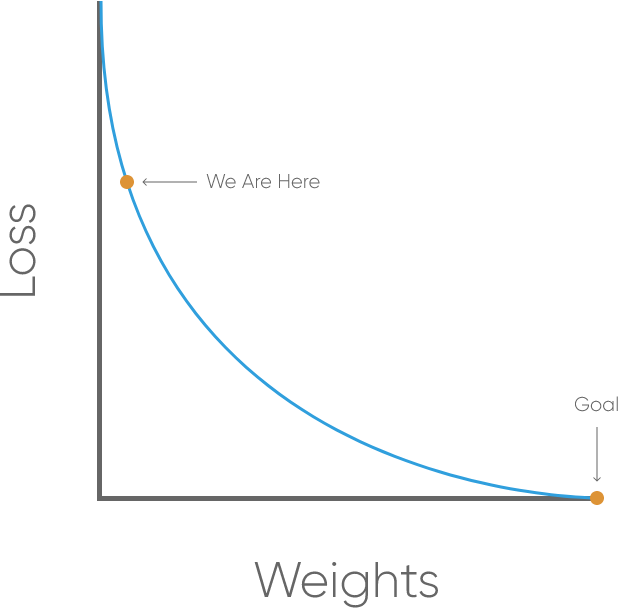

In a Medium post [2], Andrej Karpathy, now director of AI at Tesla, gave a few reasons why you should understand backpropagation. Problems such as vanishing and exploding gradients, or dying ReLUs, are some of them. Backpropagation is not a very complicated algorithm, and with some knowledge of calculus, especially the chain rule, it can be understood fairly quickly.

A neural network learns a mapping from inputs to outputs. In particular, it performs this mapping by processing the input through a set of transformations. A neural network is made up of several layers, and each of these layers is made of units (also known as neurons), as illustrated in the figure. Each transformation is controlled by a set of weights (and biases). During training, to actually learn something, the network needs to adjust these weights to minimize the error (also called the loss function) between the expected outputs and the ones it maps from the given inputs. Using gradient descent [3] as the optimization algorithm, the weights are updated at each iteration as $w \leftarrow w - \eta \frac{\partial L}{\partial w}$, where $L$ is the loss and $\eta$ is the learning rate. The gradient can be viewed as a measure of the contribution of the weight to the loss.
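The gradient descent update can be sketched on a single weight. This is a minimal illustration, assuming a toy loss $L(w) = (w - 3)^2$ with its minimum at $w = 3$. The learning rate `lr` (the $\eta$ of the update rule) and the number of steps are arbitrary choices, not values from the original text.

```python
from typing import Callable


def gradient_descent(grad: Callable[[float], float], w0: float,
                     lr: float = 0.1, steps: int = 100) -> float:
    """Repeatedly apply the update w <- w - lr * dL/dw."""
    w = w0
    for _ in range(steps):
        w = w - lr * grad(w)
    return w


def loss(w: float) -> float:
    # Toy loss with its minimum at w = 3 (an illustrative assumption).
    return (w - 3.0) ** 2


def dloss_dw(w: float) -> float:
    # Analytical derivative of the toy loss above.
    return 2.0 * (w - 3.0)


if __name__ == "__main__":
    w = gradient_descent(dloss_dw, w0=0.0)
    print(w, loss(w))
```

Each step moves the weight against its gradient. The larger a weight's contribution to the loss, the larger its correction.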

In a neural network, a layer is obtained by performing two operations on the previous layer: a linear combination followed by an activation function $f$. This gives $y = f(wx + b)$, where $x$ is the value of the previous layer unit, and $w$ and $b$ are respectively the weight and the bias discussed above. The activation is usually chosen to introduce non-linearity in order to solve complex tasks. Here we will simply assume that the activation function is the sigmoid function $\sigma(z) = \frac{1}{1 + e^{-z}}$. As a consequence, the value $y$ of a layer can be written as $y = \sigma(wx + b)$. This is the so-called forward propagation, since this computation goes forward through the network. As mentioned earlier, we use a loss function to assess the error that the network makes while predicting. Here we will use as the loss function the squared error, defined as $L = (y - t)^2$, where $t$ is the expected output.

The gradients $\frac{\partial L}{\partial w}$ and $\frac{\partial L}{\partial b}$ could be derived analytically with the chain rule. However, the analytical derivation requires quite some care, so such an analytical approach would be very difficult to implement for a complex network. In addition, computationally speaking, this approach would be quite inefficient, since we could not exploit the fact that the gradients share some common terms, as we will discuss shortly. A much easier way to obtain these gradients would be to use a numerical approximation.
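For a single sigmoid unit $y = \sigma(z)$ with $z = wx + b$ and squared error $L = (y - t)^2$ (writing $t$ for the expected output), the chain rule gives $\frac{\partial L}{\partial w} = 2(y - t)\,\sigma(z)(1 - \sigma(z))\,x$ and $\frac{\partial L}{\partial b} = 2(y - t)\,\sigma(z)(1 - \sigma(z))$. The factor $2(y - t)\,\sigma(z)(1 - \sigma(z))$ is shared by both gradients. The minimal sketch below computes it once and checks the result against a central-difference numerical approximation. It assumes scalar inputs, and the values of `w`, `b`, `x` and `t` are arbitrary illustrations.

```python
import math
from typing import Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def forward(w: float, b: float, x: float) -> float:
    """Forward propagation for one unit: y = sigmoid(w * x + b)."""
    return sigmoid(w * x + b)


def squared_error(y: float, t: float) -> float:
    return (y - t) ** 2


def analytic_grads(w: float, b: float, x: float, t: float) -> Tuple[float, float]:
    """Chain rule: dL/dw = dL/dy * dy/dz * dz/dw, and likewise for b."""
    y = forward(w, b, x)
    dL_dy = 2.0 * (y - t)   # derivative of (y - t)^2 with respect to y
    dy_dz = y * (1.0 - y)   # sigmoid'(z) = sigmoid(z) * (1 - sigmoid(z))
    delta = dL_dy * dy_dz   # shared factor, computed only once
    return delta * x, delta  # dz/dw = x and dz/db = 1


def numeric_grads(w: float, b: float, x: float, t: float,
                  eps: float = 1e-6) -> Tuple[float, float]:
    """Central differences: two extra forward passes per parameter."""
    def loss(w_: float, b_: float) -> float:
        return squared_error(forward(w_, b_, x), t)
    dw = (loss(w + eps, b) - loss(w - eps, b)) / (2 * eps)
    db = (loss(w, b + eps) - loss(w, b - eps)) / (2 * eps)
    return dw, db


if __name__ == "__main__":
    w, b, x, t = 0.8, -0.2, 1.5, 1.0
    print(analytic_grads(w, b, x, t))
    print(numeric_grads(w, b, x, t))
```

The two results should agree to several decimal places. The numerical version is handy for checking a hand-derived gradient, while backpropagation reuses shared factors such as `delta` instead of rerunning the forward pass for every parameter.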
